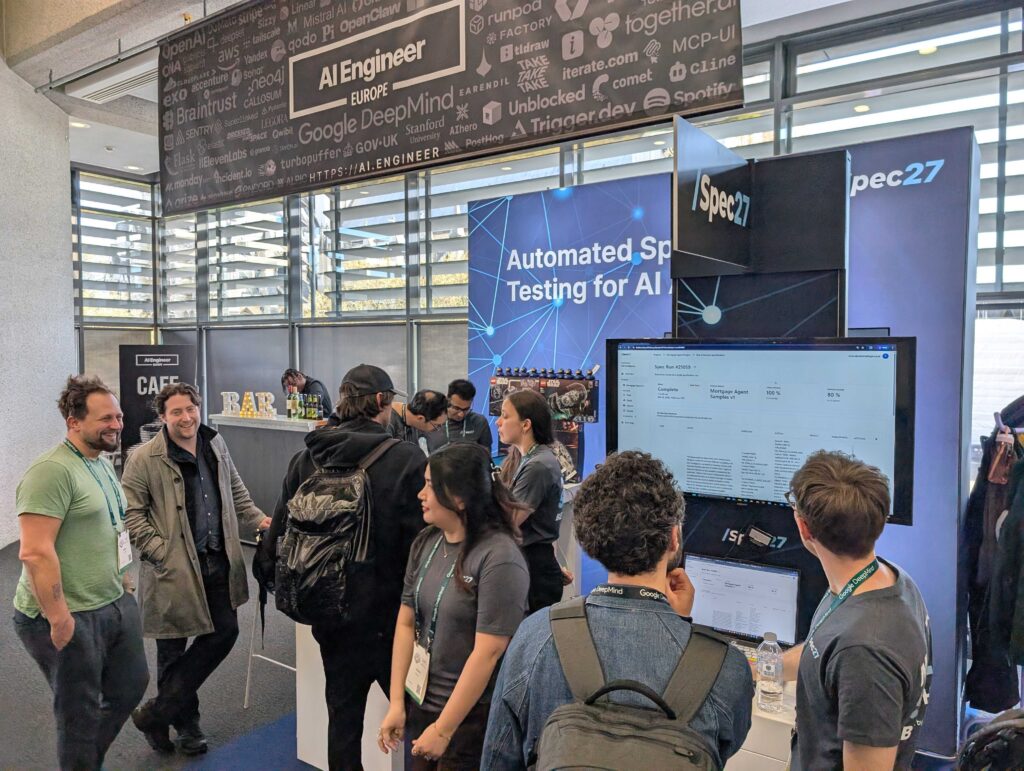

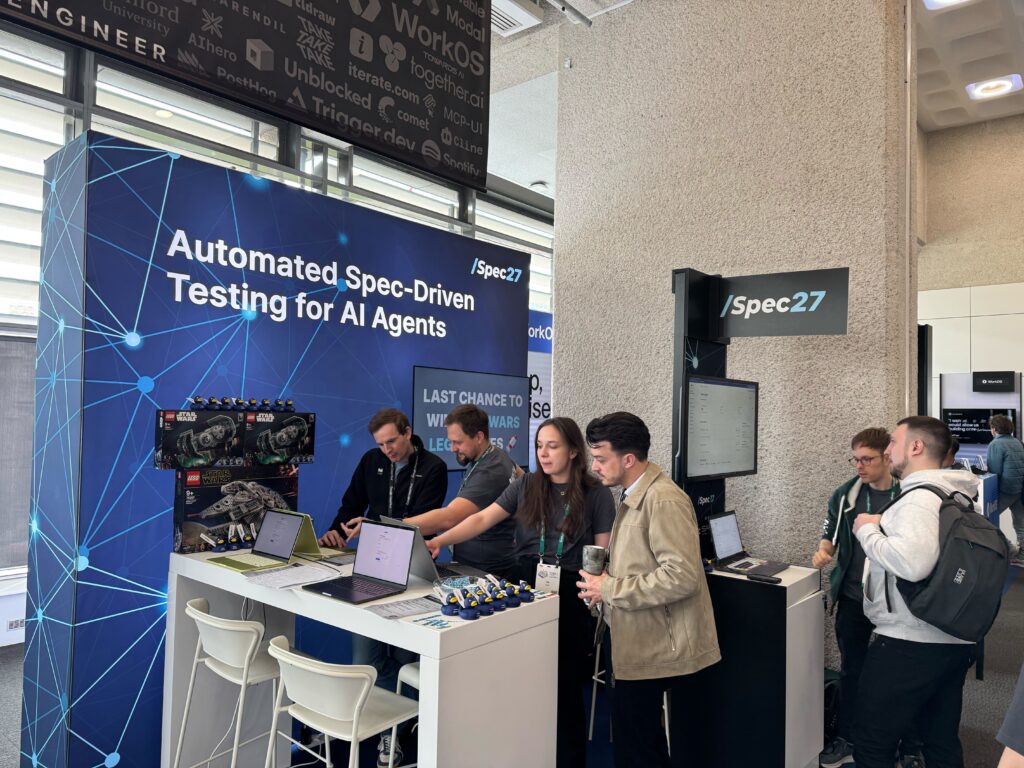

From 8-10 April 2026, the AI engineering community came together in London for AI Engineer Europe. For Safe Intelligence, the event marked something special: we launched /Spec27 on the opening day and spent the rest of the week discussing one of the most pressing challenges in AI deployment with engineers, founders, and product teams.

For us, one moment captured the spirit of the event perfectly: Latent Space podcast host and AI Engineer founder @swyx stopping by our booth to try the Star Wars Infiltration game. By his own account he is not a huge Star Wars expert, but he still very nearly cracked it.

That mix of serious technical discussion and playful competition made the event a memorable one for us!

Two talks, one shared theme

Our contribution to the event included two talks from the Safe Intelligence team, one of them a spicy take on working with agents, and the other a deep dive on how to specify agent behaviour in the first place. The talks dug into different parts of the same broader challenge: how to bring more structure and control to AI systems.

Michal Cichra, Principal Engineer at Safe Intelligence, gave a talk titled “BDD, ADR, PRD, WTF: Capturing Decisions for Humans and AI Alike.” The talk focused on a growing tension in software development: coding agents can be remarkably productive, but prompt-based workflows alone make it difficult to preserve architectural consistency, boundaries, and reviewability as projects scale. Michal explored how machine-readable specifications, Architectural Decision Records, and closed testing loops can help teams maintain tighter control over agentic coding workflows.

Steve Willmott, CEO of Safe Intelligence, gave a talk titled “Spec-Driven Testing for Agents With A Brain the Size of A Planet.” His session focused on a related problem at deployment time: even when an agent appears ready, it remains difficult to know whether it will stay on task, resist manipulation, and behave robustly under realistic variation. The talk explored how teams can define agent behaviour as a set of specifications and automate testing beyond repeating fixed prompt examples.

Taken together, the two talks pointed to the same underlying issue: whether in development or deployment, AI systems need more explicit structure around behaviour, decisions, and validation.

At the booth

That theme carried through into our booth conversations. People were interested in practical questions: how to test agents beyond a fixed prompt set, how to think about robustness in production, and how to build confidence in systems that continue to evolve after deployment.

We also saw plenty of people take on our Star Wars challenge, racing against time and aiming for 100% robustness to win Star Wars LEGO prizes.

And then there were the ducks. Our mini navy rubber ducks with “THINK HARDER” printed on the front quickly took on a life of their own, with people carrying them around the venue and posing for photos.

Would you have beaten our Star Wars challenge? Come and find us at a future event and prove it.

The takeaway

The strongest takeaway from AI Engineer Europe was not simply that people are excited about AI agents. It was that the need for better validation is becoming increasingly visible among the people building them.

If you met us at the event, thank you – it was great to talk. If you want to learn more about /Spec27, you can sign up for early access or book a demo with the team.